(CrowdFlower : 2/14-4/14)

Contributors, as they are called, are the 5M+ people around the world who do work on CrowdFlower's platform.

The application that enables them to do work is one of the company's most heavily trafficked, as well as one of its most complicated, blending a Rails backend with MooTools, jQuery, and RequireJS on the frontend.

The application's UX

...had largely stayed the same for the last five years. In Q1/2014, we decided to enhance it by making it more interactive, with the aim of engaging our users more and conveying just how much work there is in our system.

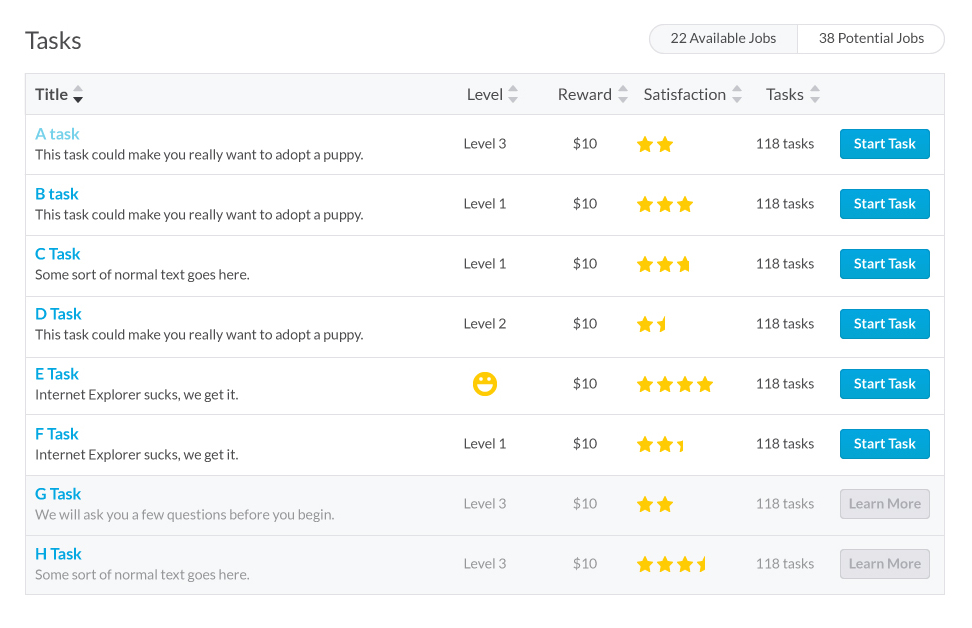

Working with the Product Manager and an external Designer, we came up with the following high-resolution mock:

Because the application is so heavily used, we knew we couldn't simply flip the switch on a new design overnight, both from a community management standpoint and for the sake of application performance.

Instead, we chose a strategy that was a first at the company: using A/B testing to arrive at a design that would perform as well as, if not better than, the original.

Our key metric in that regard was contributors' performance after being exposed to the new UX, particularly the messaging around our forthcoming gamification and the introduction of Levels.

In the beginning, we did not have the infrastructure to determine the value of that metric, so we simply settled on 'clicks' as a (conversion) proxy to understand whether the new design was having an impact.

Without an A/B testing framework in place, I needed to choose one. As the requirements for it were not concrete, I did some due diligence in vetting several options and wrote up a review of A/B testing frameworks for Rails.

It became obvious that Vanity was best suited to our needs. (Since

it doesn't yet have the ability to throttle a percentage of the traffic receiving experiments, I

augmented it with Flipper.)
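The throttling idea can be sketched in plain Ruby. This is only an illustration of the concept, not Flipper's or Vanity's API: the `ExperimentThrottle` module, the `design_for` helper, and the `"new_ux"` salt are hypothetical names, and CRC32 bucketing is an assumption about one reasonable way to pick a stable slice of contributors.

```ruby
require "zlib"

# Hypothetical helper: decides whether a contributor falls inside the
# throttled slice of traffic that is allowed to see experiments at all.
module ExperimentThrottle
  BUCKETS = 100

  # Maps a contributor id to a stable bucket in 0...100 using CRC32,
  # so the same contributor always lands in the same bucket.
  def self.bucket_for(contributor_id, salt = "new_ux")
    Zlib.crc32("#{salt}:#{contributor_id}") % BUCKETS
  end

  # True when the contributor's bucket is below the rollout percentage.
  def self.exposed?(contributor_id, percentage, salt = "new_ux")
    bucket_for(contributor_id, salt) < percentage
  end
end

# Contributors outside the throttled slice always get the original design.
# Those inside get whatever the block returns; in a real app the block
# would ask the A/B testing framework which alternative to show.
def design_for(contributor_id, percentage)
  return :control unless ExperimentThrottle.exposed?(contributor_id, percentage)
  yield
end

puts design_for(42, 25) { :new_design }
```

Because the bucket is derived from the contributor id, raising the percentage only ever adds contributors to the exposed slice, so nobody flips back and forth between designs as the rollout widens.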

Once that was in place, we could begin iterating on the design, knowing with confidence

how we were impacting the user experience.

We knew we wanted the experience to be snappy, but completely replacing the existing experience with a Rich Internet Application was far out of scope for the first month, particularly as there were infrastructure changes to be made to retrofit the stack with A/B testing. We decided to make progress iteratively over several sprints.

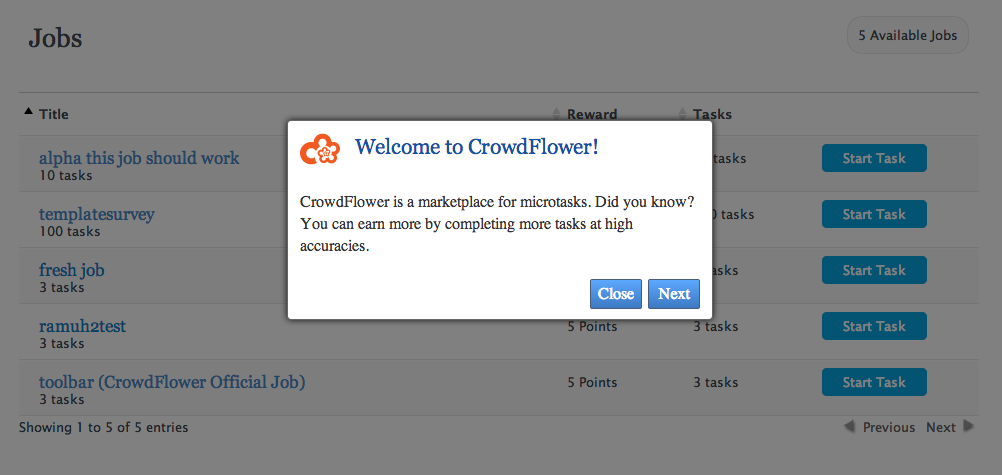

In our first test, we pitted the control (original) against a bare-bones implementation of the high-resolution mock as the new design.

The new version out-performed control (in terms of clicks) 21.3% vs 20.3% (at 95% confidence), so I continued to iterate on the implementation, coming up with the following:

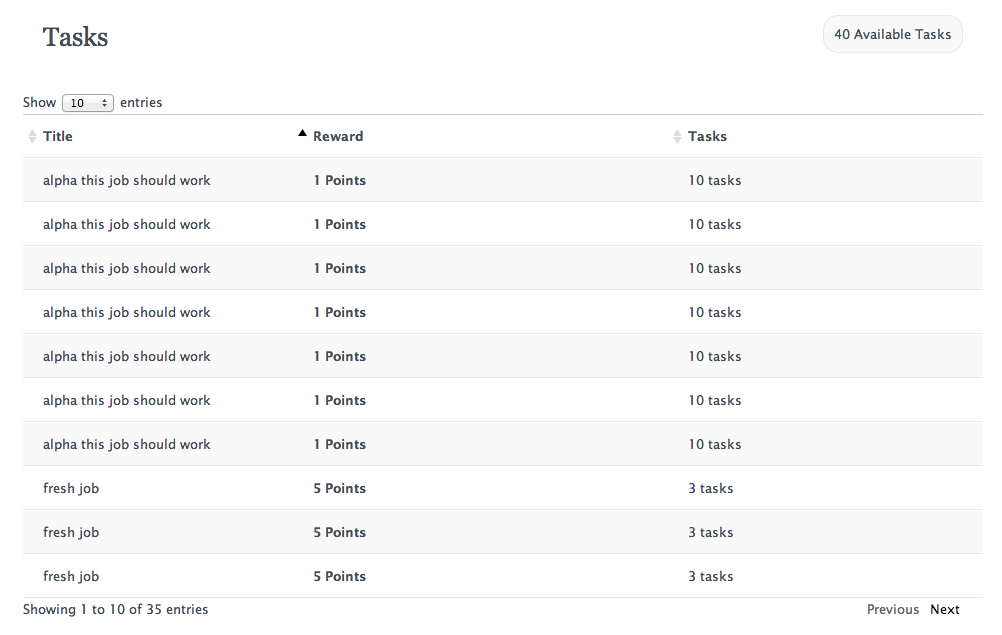

Calculating other contributors' overall satisfaction with a task (denoted by the stars) proved too inefficient in this iteration, and it wound up losing.

On the assumption that we needed to make the experience snappier in order to drive engagement, it was obvious that we would need more (and faster) interaction and, therefore, an interactive client-side implementation.

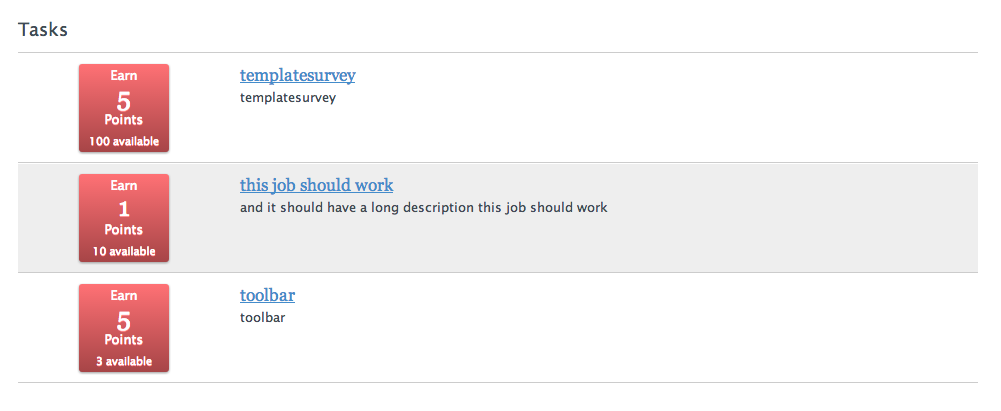

Building what was essentially a completely parallel product, leveraging only some of the infrastructure that the server-side rendition was utilizing, I began to flesh out the following:

Further refinement (and actual data) was necessary to get it looking more like the high-res mock (and like its server-side-rendered peer).

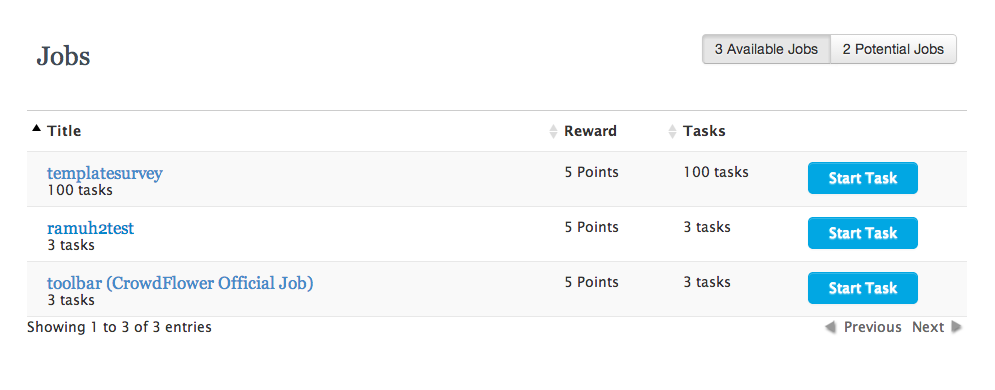

At this point, we implemented and integrated our own homemade badging solution, beginning to display badges in the following iteration.

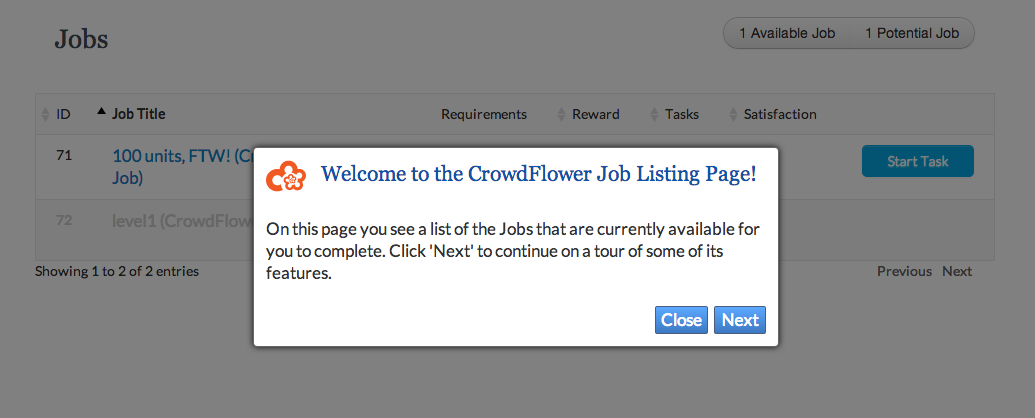

Testing the impact of particular messaging was also of interest, so we added a Guiders variation as well.

At this time, we also leveraged Google Analytics Events on the Guider buttons to track how far the user got in our messaging.

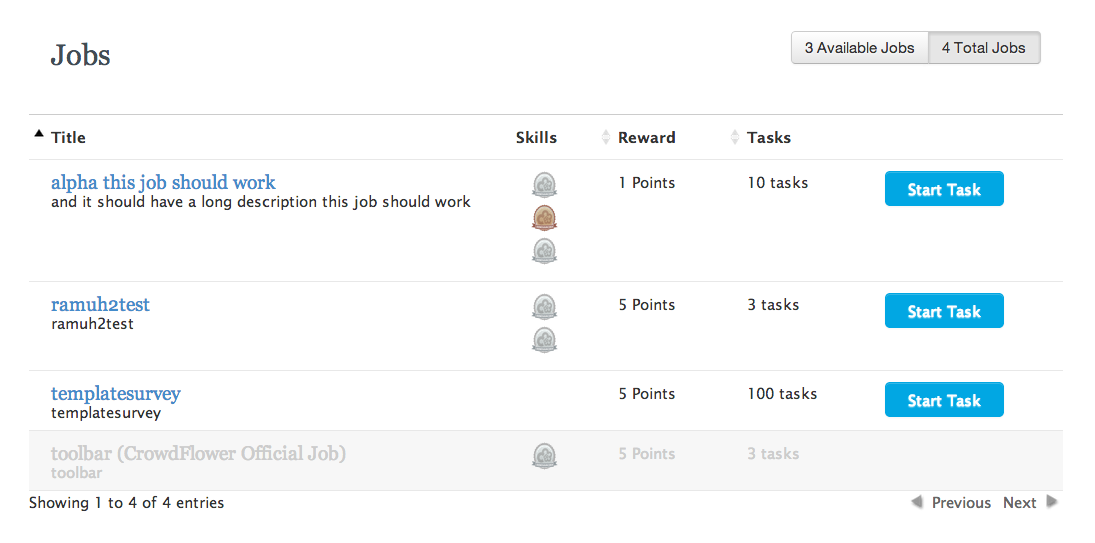

Letting the experiments run for a few days with sufficient traffic, we found that the client-side-rendered version performed no worse than the server-side-rendered version (23.9% vs 22.9%) and that having guiders did not perform significantly worse either (23.1% vs 23.7%), so we decided to keep both.
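For a sense of how a comparison like 23.9% vs 22.9% can be checked, here is a rough sketch of a two-proportion z-test in plain Ruby. The participant counts are hypothetical (the real sample sizes aren't recorded here), the function names are made up for illustration, and this is not necessarily the exact calculation Vanity performs.

```ruby
# Share of participants who converted (clicked).
def conversion_rate(conversions, participants)
  conversions.to_f / participants
end

# Two-proportion z-score using a pooled standard error.
# A positive value means the variant converted better than control.
def z_score(control_conv, control_n, variant_conv, variant_n)
  p_control = conversion_rate(control_conv, control_n)
  p_variant = conversion_rate(variant_conv, variant_n)
  pooled = (control_conv + variant_conv).to_f / (control_n + variant_n)
  std_err = Math.sqrt(pooled * (1 - pooled) * (1.0 / control_n + 1.0 / variant_n))
  (p_variant - p_control) / std_err
end

# Two-sided critical values of the standard normal distribution.
CRITICAL_VALUES = { 90 => 1.645, 95 => 1.960, 99 => 2.576 }.freeze

# Highest confidence level (in percent) the z-score clears, or nil if none.
def confidence_level(z)
  CRITICAL_VALUES.select { |_, critical| z.abs >= critical }.keys.max
end

# Hypothetical counts: 10,000 participants per arm, 22.9% vs 23.9% clicks.
z = z_score(2_290, 10_000, 2_390, 10_000)
puts format("z = %.2f, confidence: %s", z, confidence_level(z) || "below 90%")
```

The same one-point gap becomes more or less convincing depending on how many contributors saw each version, which is why the experiments were left running until there was sufficient traffic.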

By that time, the new version was out-performing control (the original design) 22.2% vs 20.7% (at 99% confidence), so we decided to move forward with rolling out the new experience to 100% of contributors, doing some polishing (copy/styling) work before finally settling on the following:

Infrastructure

Server-side

Client-side

Results

- Used A/B testing to upgrade the company's most highly trafficked page (5M+ views/month), increasing user engagement by 5% and saving $2K/month (in Bunchball costs) by rolling our own simple badging solution.

Technologies

- AWS

- Capistrano

- CSS3

- DataTables

- Flipper

- Heroku

- jQuery

- Mixpanel

- New Relic

- Nginx

- Optimizely

- Rails

- RequireJS

- Ruby

- Vanity